Data Flow Model

Data Flow Model Definition

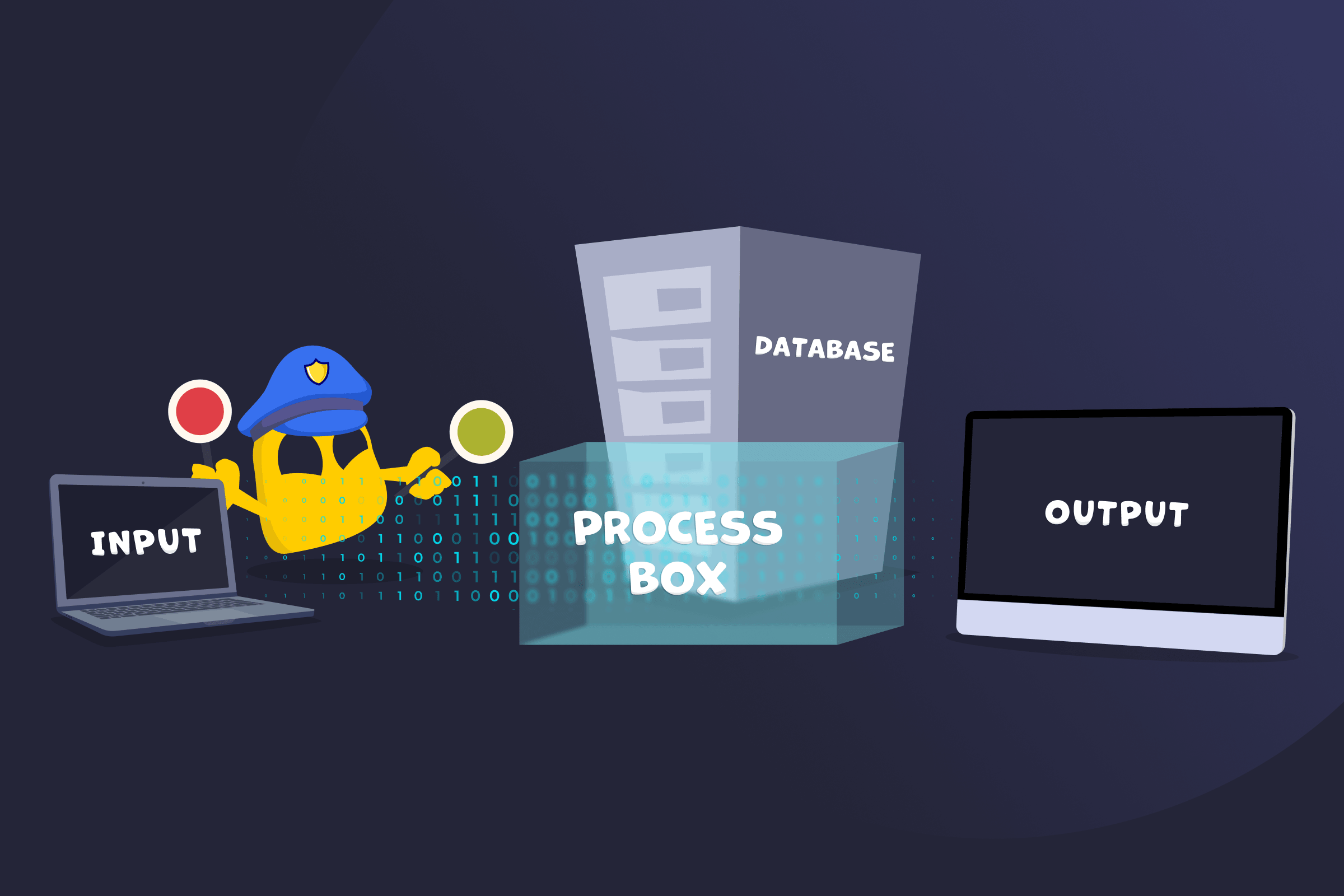

A data flow model describes how data moves through a system and how it’s handled at each step. It shows where data enters, how it's processed and stored, and where it goes next. A data flow model is usually presented as a diagram that uses standard symbols, such as arrows, circles, and rectangles, to show how data flows between parts of the system. The model includes inputs, processes, data stores, and outputs, and can be shown at different levels of detail to map data flow clearly.

Key Components of a Data Flow Model

- Inputs: Points where data enters the system, such as user input or external sources.

- Processes: Steps where data is used, transformed, or analyzed.

- Data stores: Locations where data is stored for later use, such as databases or files.

- Outputs: A place where data goes after processing, such as results, reports, or other systems.

- Data flows: Paths that show how data moves between inputs, processes, data stores, and outputs.

Types of Data Flow Models

- Logical data flow model: Shows what the system does and how data moves, without focusing on technical details.

- Physical data flow model: Shows how the system is actually implemented, including hardware, software, and storage.

Benefits of Using a Data Flow Model

- Improves understanding and communication: Gives a clear view of how data moves through a system and helps teams and stakeholders understand it easily.

- Simplifies complex systems: Breaks processes into smaller, easy-to-follow parts.

- Supports better design and planning: Helps structure systems and make decisions before building them.

- Identifies issues early: Reveals gaps, inefficiencies, or unnecessary steps in data handling.

- Supports documentation: Provides a clear reference for how the system works.

- Boosts data breach prevention: Helps identify where data may be exposed and where protection is needed.

Limitations of Data Flow Models

- Lacks implementation detail: Doesn’t show technical details like code, algorithms, or system architecture.

- Doesn’t explicitly define timing or sequence: Focuses on data movement, rather than when or in what order processes occur. However, the flow structure often makes the general sequence of operations easy to infer.

- Can oversimplify complex systems: May miss edge cases or detailed logic in large systems.

- Can become complex at scale: Large systems can lead to crowded, hard-to-read diagrams.

Read More

FAQ

The purpose of a data flow model is to show how data moves through a system and how it's handled at each step. It helps explain where data comes from, how it's processed, where it's stored, and where it goes. This makes it easier to understand how a system works, plan its design, and identify gaps or issues in how data is handled.

You should use a data flow model when you need to understand, design, or explain how data moves through a system. It's useful during system planning, analysis, and improvement to map how data enters, is processed, stored, and leaves the system. It also helps when identifying inefficiencies, documenting workflows, or reviewing how data is handled for accuracy and security.

A data flow model should be as detailed as needed to clearly show how data moves through a system, but not so detailed that it becomes hard to understand. It usually starts with a high-level view and can be broken down into more detailed levels if needed. Each level should add clarity by showing specific processes and data flows without including unnecessary technical detail.

45-Day Money-Back Guarantee

45-Day Money-Back Guarantee